For our system we have 3 separate companies. We have a VPN tunnel that allows two of the companies to access our app server/database to get into Epicor. Question is, if we were to spin up separate app servers at the other locations, would that improve speeds at all or would the traffic between the app server/database still be the bottleneck? Would database replication be an option so we would have the database stored at each location and replicated? Just wondering what the best practice would be and how we can improve performance.

You want the lowest latency possible between app server and database. In my experience you’ll have much better performance accessing the app server via VPN from the client than if you had a “local” app server accessing the database via the VPN tunnel. I tried it, the results were no good and we went back to a single app server and database setup.
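A rough way to see why the app-server-to-database hop matters so much: one client call usually fans out into many database round trips, so latency on that link gets multiplied. The sketch below is a back-of-the-envelope model only; the call counts and round-trip times are illustrative assumptions, not measurements from Epicor.

```python
# Rough model of network wait time for one user action.
# All numbers are illustrative assumptions, not Epicor measurements.

def network_wait_ms(client_calls, client_rtt_ms, db_queries_per_call, db_rtt_ms):
    """Total time spent waiting on the network, in milliseconds."""
    client_leg = client_calls * client_rtt_ms
    db_leg = client_calls * db_queries_per_call * db_rtt_ms
    return client_leg + db_leg

# Hypothetical workload: 5 client calls, each triggering 50 database queries.
CALLS, QUERIES = 5, 50
LAN_RTT_MS, VPN_RTT_MS = 1, 60

# Option 1: client over the VPN, app server sitting next to the database.
central = network_wait_ms(CALLS, VPN_RTT_MS, QUERIES, LAN_RTT_MS)

# Option 2: "local" app server, database reached over the VPN.
local_app = network_wait_ms(CALLS, LAN_RTT_MS, QUERIES, VPN_RTT_MS)

print(f"central app server: {central} ms")    # 5*60 + 250*1  = 550
print(f"local app server:   {local_app} ms")  # 5*1  + 250*60 = 15005
```

With these made-up numbers the "local" app server spends far longer waiting on the wire, which matches the experience described above.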

I think there are options to have multiple app servers and a local database replica that is used at least for reads, and writes back to the primary database. I have not actually tried it.

I have a location in the UK, two in the Netherlands, single people in 7 other countries, 3 physical locations in the US and at least 20 remote workers in the USA at any given time. ALL of them access via a direct VPN to the main office with a single appserver and separate DB server. It works fine. We do have a secondary appserver (hot spare kind of thing) ready to go just in case.

There is variable latency from each of the ISPs involved, as well as on the redundant ISP links we have here in the main office. There really isn’t much we can do about any of it. We’re dying to get into browser mode with Kinetic to gain some performance, but everyone is dealing with the occasional downtime or loss of the VPN as best we can.

We have 41 physically distant sites across the country and 8 other international locations. We run three app servers in Azure behind a load balancer, and every site connects over a site-to-site VPN.

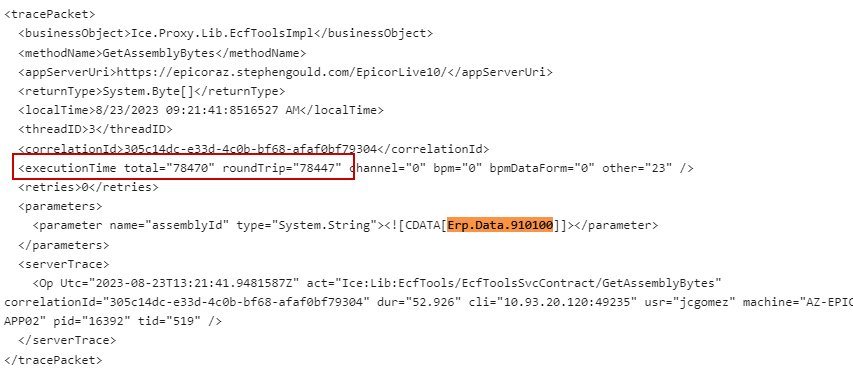

Works fine. In 2023 Epicor moved their BPM engine to the web and introduced a call made from the client to fetch server-side DLLs, and that has bitten us.

They call GetFile (Erp.Data.910100) from the client. That file is 40+ MB, and on an 8 Mbit connection (that’s 1 MB a second) it takes 40 seconds to open the VPN screen. Not thrilled.
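The arithmetic behind that number, as a quick sketch. It is a best-case figure that ignores protocol overhead and VPN latency, so real transfers will be somewhat slower.

```python
def transfer_seconds(size_megabytes, link_megabits_per_sec):
    """Best-case transfer time, ignoring protocol overhead and latency."""
    megabytes_per_sec = link_megabits_per_sec / 8  # 8 bits per byte
    return size_megabytes / megabytes_per_sec

# 40 MB file over an 8 Mbit/s link
print(transfer_seconds(40, 8))  # 40.0
```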

Seriously considering going to the WAN for a direct to azure connection from client instead of piping through VPN…

But overall epicor works fine, just a little sluggish

LOL - since they created Epicor 9…

but seriously…

Big or small footprint, we’re all doing something that works the best for our scenarios, and when Epicor changes again, we’ll all change too. Be dynamic in your thinking, and plan to change again.

@josecgomez could you elaborate a bit on the app server configuration with a load balancer? Do you configure the app servers with the local server’s name while the load balancer handles a common name? For example, normally on a single server in EAC under Server Management it would look something like EpicorAppServer1.example.com with the instances listed below. The URL would be https://EpicorAppServer1.example.com/EpicorInstance

Are your clients connecting to something like https://Epicor.example.com/EpicorLive and the load balancer is determining which one they connect to?

https://EpicorAppServer1.example.com/EpicorInstance

https://EpicorAppServer2.example.com/EpicorInstance

https://EpicorAppServer3.example.com/EpicorInstance

Alternatively is there a way to do something similar to this without the load balancer where we can just use a DNS setting to manually switch over, more for failover? The key here would be to have the friendly DNS name set in the EAC on each server so that if something happens to one, we can just point DNS to a second server.

In all of these scenarios, I assume you are using a wildcard certificate for all of them, something like *.example.com

Nice find

Our setup is pretty straightforward

AppServers:

Epicor-App01.TLD.COM

Epicor-App02.TLD.COM

Epicor-App03.TLD.COM

Load Balancer

EpicorLB.TLD.COM

Clients all connect to EpicorLB.TLD.COM and the load balancer does round robin (or whatever you choose) across the 3 app servers. This is all managed at the load balancer.

Yes we use a wildcard cert for all 3 appservers (and the load balancer)

You could do a poor man’s load balancer by using DNS as you stated. If you have regions that you want to push to one server or another, you could just have a local DNS cache respond with the IP for APP01 or APP02 depending on the region, but that’s less load balancing and more traffic shaping.
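For anyone unfamiliar with the term, here is a minimal sketch of what round robin means at the load balancer: each new request goes to the next backend in a fixed rotation. This only illustrates the idea; a real load balancer also does health checks and much more. The hostnames follow the naming above, and the third one (Epicor-App03.TLD.COM) is assumed.

```python
from itertools import cycle

# Backends behind the load balancer (third hostname is an assumption).
APP_SERVERS = [
    "Epicor-App01.TLD.COM",
    "Epicor-App02.TLD.COM",
    "Epicor-App03.TLD.COM",
]

def round_robin(servers):
    """Return an iterator that hands out backends in a repeating fixed order."""
    return cycle(servers)

picker = round_robin(APP_SERVERS)

# Five incoming requests get spread across the three servers in turn.
assignments = [next(picker) for _ in range(5)]
for request_number, server in enumerate(assignments, start=1):
    print(f"request {request_number} -> {server}")
```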

Thanks. Any documentation you have seen related to load balancing or any tricks you have seen? The reason I asked is I thought Epicor had some requirements for persistent sessions, but I could be wrong there? Just wondering if there are any other requirements around load balancers.

How do you handle the task agents for load balancing? Do you have an appserver4 for those tasks and reports?

Epicor as of 2022.X has gone fully RESTful, so the session is passed as a header and there’s no “connected” state. As long as the session ID is passed around, it will “know” who you are, etc.
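A toy simulation of why that removes the need for sticky sessions: if identity travels with every request, any backend can answer it. Everything here is hypothetical, including the header name `Session-Id`, the shared `SESSIONS` store, and the short server names; it is not how Epicor implements sessions internally.

```python
from itertools import cycle

# Shared session store standing in for state that lives outside any single
# app server (hypothetical; Epicor's real mechanism is not shown here).
SESSIONS = {"abc123": "jdoe"}

def handle(server_name, headers):
    """Any server can answer, because identity travels with the request."""
    user = SESSIONS.get(headers.get("Session-Id"))  # hypothetical header name
    if user is None:
        return f"{server_name}: 401 unknown session"
    return f"{server_name}: 200 hello {user}"

# The load balancer rotates through backends; the client sends the same header.
servers = cycle(["app01", "app02", "app03"])
headers = {"Session-Id": "abc123"}

responses = [handle(next(servers), headers) for _ in range(3)]
for line in responses:
    print(line)
```

All three servers recognise the same user, so it does not matter which one the load balancer picks for a given request.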

We just point the task agent to one of the app servers; no need for load balancing there. You can have up to 3 task agents running on the same DB instance, and frankly that should be plenty.

You don’t really need to pipe the clients through the VPN, just the appserver to DB connection… You could have one app server at each site connecting to a single database server through a VPN. Disadvantage being that if the internet or VPN goes down, both remote sites go down completely…

You better have a nice robust high bandwidth, low latency connection.

Yeah, tried just the app server on site and couldn’t even get the client connected it was so slow. The app server is on west coast and the SQL server is on the east coast. There are a few issues we are trying to solve.

1. The farther you travel west, the slower the Epicor client gets.

2. Client deployment and upgrades are going to take a long time with the auto updater: 6-20 minutes across the country. I was hoping using a local app server would solve that, but there is too much latency, as @klincecum pointed out.

3. We want to build in some failover so that if an app server goes down, clients can reconnect to another.

Eventually we will have to figure out a few sites outside the US, but for now the main concern is the 14 sites within the US. So far @josecgomez solution may be the only one I have found to meet most of the needs. I’m not sure which would be the most cost-effective Azure vs building it out on our own.

The deployment server for your client doesn’t have to be the same as the app server. We use FTP to deploy our clients and it’s a completely separate server (that one can be done via DNS to pick the closest node).

1 Deployment Server on East Coast

1 Deployment Server on West Coast

1 Deployment Server in Europe

All three answer to kineticdeploy.tld.com, and DNS makes each “local” client resolve to one of the above. Works like a dream. Then when you upgrade, all you have to do is update each of those 3 servers and you are off to the races.
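The DNS trick can be pictured as a lookup table keyed by where the client sits: same name, different answer per region. The sketch below simulates that in plain Python rather than configuring a real DNS server; the region keys and IP addresses are made up (the addresses come from the ranges reserved for documentation).

```python
# Simulated split-horizon DNS: one name, a different answer per region.
# Region keys and IPs are hypothetical (documentation-only address ranges).
DEPLOY_NAME = "kineticdeploy.tld.com"

REGIONAL_ANSWERS = {
    "us-east": "192.0.2.10",     # deployment server on the East Coast
    "us-west": "198.51.100.10",  # deployment server on the West Coast
    "europe": "203.0.113.10",    # deployment server in Europe
}

def resolve(name, client_region, default_region="us-east"):
    """Return the deployment server IP a client in this region would get."""
    if name != DEPLOY_NAME:
        raise LookupError(f"no record for {name}")
    # Clients in unlisted regions fall back to the default server.
    return REGIONAL_ANSWERS.get(client_region, REGIONAL_ANSWERS[default_region])

print(resolve(DEPLOY_NAME, "us-west"))
print(resolve(DEPLOY_NAME, "europe"))
print(resolve(DEPLOY_NAME, "asia"))  # falls back to the default region
```

Because every client is configured with the same name, nothing in the sysconfig has to differ between sites.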

I’m not sure which would be the most cost-effective Azure vs building it out on our own.

It’s either a capital expense every few years or a recurring operating expense. The upside of Azure is that you can easily geolocate stuff and scale quickly. That’s why we went with Azure: we have 41+ sites all over the world and it’s hard to physically schlep boxes around. The networking is also a nightmare on your own; Azure can do site-to-site, point-to-point, and load balancing all within its own network, plus things like Azure ExpressRoute, which lets you extend your networking into theirs.

The downside is that it is more expensive and recurring, but you pay for convenience and the reliability of their services and network.

Can be https too. That’s how Epicor SaaS distributes it - using Azure’s content delivery network. If you use SharePoint online, you can also use the same content delivery network, which is included in your subscription.

For those still using FTP and running WS_FTP from Progress: make sure it is up to date!!! The threat actors are picking on Progress, which also owns the MOVEit file transfer service that has led to some of the largest breaches in history.

Maybe a good idea not to store documents on your transfer services. Place a copy there and then remove them after a certain amount of time.


@josecgomez This is exactly what I was trying to do. Maybe I’m just not understanding the logistics? How are you getting the client to connect to the “real” app server for work vs knowing when to update from your “deployment” servers? Are there separate settings in the client sysconfig you are using? I assume then you are manually manipulating the sysconfig each time you upgrade as well, so that when the client gets pulled down, the correct sysconfig gets pulled as well? Or are you pushing the full 450MB client to all machines?

The Sysconfig has several settings in it which can be manipulated to accomplish this.

<appSettings>

<!-- Enter the URL of your appserver. Format is "https://serverName/SiteName/ -->

<!-- THIS IS THE APPSERVER URL OR LOAD BALANCER -->

<AppServerURL value="https://loadbalancer.mytld.com/EpicorLive10" />

<AuthenticationMode value="AzureAD" options="Windows|AzureAD|IdentityProvider|Basic" />

<!-- Can set default language code for startup -->

<CultureCode value="enu" />

<!-- SNIP....-->

<deploymentSettings>

<!-- THIS IS THE DEPLOYMENT SERVER -->

<deploymentServer uri="ftp://deploymentserver.mytld.com/Production/" />

<deploymentType value="zip" options="xcopy|zip" />

<deploymentPackage value="ReleaseClient.zip" />

<!-- only used when deployment type is zip -->

<doDateComparison value="False" bool="" />

<!-- set to false and the deployment will copy all files from the -->

<!-- deployment server, set to true and the deployment will use -->

<!-- date comparison to do the copy -->

<clearClientDir value="Core" options="Never|Always|Prompt|Core" />

<!-- Determines if the client install directory is cleared prior to doing an update -->

<clearDNT value="Always" options="Never|Always|Prompt" />

<!-- Determines if the local client cache is cleared prior to doing an update. -->

<optimizeAssemblies value="false" bool="" />

<!-- this setting requires the user to have admin rights on their machine -->

</deploymentSettings>

<!--SNIP-->

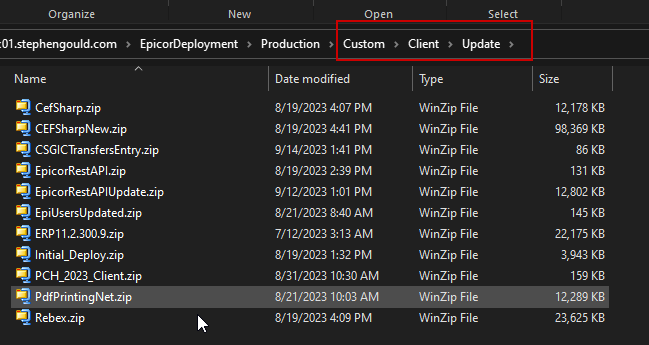

<customizations>

<customization name="ERP11.2.300.9" directoryName="Update" version="11.2.300.9" deploymentType="Zip" />

<customization name="Initial_Deploy" directoryName="Update" version="11.2.300.9" deploymentType="Zip" />

<customization name="EpicorRestAPI" directoryName="Update" version="11.2.300.9" deploymentType="Zip" />

<customization name="CefSharp" directoryName="Update" version="11.2.300.9" deploymentType="Zip" />

<customization name="Rebex" directoryName="Update" version="11.2.300.9" deploymentType="Zip" />

<customization name="CEFSharpNew" directoryName="Update" version="11.2.300.9" deploymentType="Zip" />

<customization name="EpiUsersUpdated" directoryName="Update" version="11.2.300.9" deploymentType="Zip" />

<customization name="PdfPrintingNet" directoryName="Update" version="11.2.300.9" deploymentType="Zip" />

<customization name="PCH_2023_Client" directoryName="Update" version="11.2.300.9" deploymentType="Zip" />

<customization name="CSGICTransfersEntry" directoryName="Update" version="11.2.300.9" deploymentType="Zip" />

</customizations>

</appSettings>

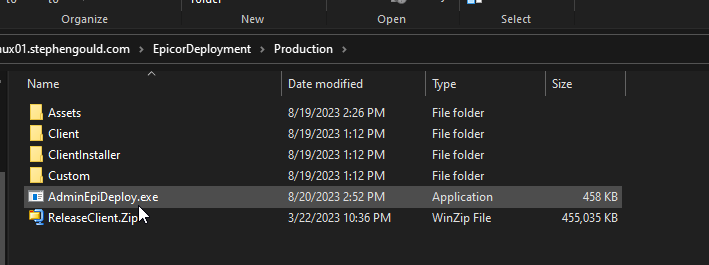

Your deployment just needs to have this structure (to match the original install)

Then it just works. When the clients launch, they reach out to ftp://deploymentserver.mytld.com/Production/ and look for changes inside /Client/config/EpicorLive10.sysconfig. If they find any in the customizations section, they download the matching “update” zip from inside the Custom folder. This is for minor patches and custom DLL deployment.

If the client finds a difference in the (major) version number, it will also download the latest ReleaseClient.zip.

So when you upgrade, you just have to make sure that your deployment server has the latest ReleaseClient, Client (folder) and config, as well as any custom zips (for patches and such).
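To make the update check concrete, here is a rough sketch of the decision as described above: compare the version and the customization entries in the local sysconfig against the copy on the deployment server, then list what needs downloading. This is an approximation for illustration, not Epicor's actual updater logic; the function names, the `Custom/<name>.zip` path pattern, and the sample data are all assumptions.

```python
import xml.etree.ElementTree as ET

def customizations(xml_text):
    """Map customization name -> version from a <customizations> fragment."""
    root = ET.fromstring(xml_text)
    return {c.get("name"): c.get("version") for c in root.iter("customization")}

def plan_update(local_xml, server_xml, local_version, server_version):
    """List the packages a client would need to pull (hypothetical naming)."""
    downloads = []
    if local_version != server_version:
        downloads.append("ReleaseClient.zip")  # full client package
    local = customizations(local_xml)
    for name, version in customizations(server_xml).items():
        if local.get(name) != version:         # new or changed entry
            downloads.append(f"Custom/{name}.zip")
    return downloads

# Sample data: the server lists one customization the client has not seen yet.
LOCAL = """<customizations>
  <customization name="Rebex" directoryName="Update" version="11.2.300.9" deploymentType="Zip" />
</customizations>"""

SERVER = """<customizations>
  <customization name="Rebex" directoryName="Update" version="11.2.300.9" deploymentType="Zip" />
  <customization name="CefSharp" directoryName="Update" version="11.2.300.9" deploymentType="Zip" />
</customizations>"""

patch_only = plan_update(LOCAL, SERVER, "11.2.300", "11.2.300")
full_upgrade = plan_update(LOCAL, SERVER, "11.2.300", "11.2.400")
print(patch_only)
print(full_upgrade)
```

The first call models a minor patch (only the new custom zip is fetched); the second models an upgrade, where the full client package is fetched as well.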

All this work goes away with the browser… when we get there. Of course, it’ll be 100MB of JavaScript, but at least the upgrades should be simpler.


Another obvious possibility would be to set up a single app server and a single SQL server, plus a Terminal Services server on-site, and work through Remote Desktop or RemoteApp connections over the VPN. I think that is probably the lowest cost solution, too… Then your clients can literally be Raspberry Pis, and there is never any client to update other than the one on the Terminal Services server…