Good morning,

I have a few large DMT processes to run that include deleting materials and operations from Part Masters, and eventually open jobs as well. Deleting about 12k materials took about 3 hours, and deleting 98k operations will take about 18 hours. I am running at between 55 and 90 RPM (records per minute).

We have dedicated cloud tenancy, so I can’t access the server-side stuff. Just curious if there is anything I can do to reduce the processing time for DMT.

Thanks!

Nate

There are a few things I look at when I have very long uploads:

- Run DMT on the Epicor AppServer to reduce network lag. Since you’re cloud, this doesn’t apply.

- Check for and disable (if possible) any BPMs on the business objects and tables you’re updating. Especially ones that are more for “everyday” processing that you don’t need to worry about with your data or anything that sends emails. I’ve had some really badly designed BPMs double my upload time when enabled.

- Split the file into multiple uploads. You can run multiple loads at a time, and this will sometimes result in faster performance. If you’re working with BOMs and BOOs, though, I don’t know if you can split these up if you’re using the same ECO group for all of them. At the very least, you will have smaller chunks of data to upload that you can review, and you can make sure each one finishes without your computer deciding to reboot in the middle of a 10-hour overnight upload (this totally didn’t happen to me during an implementation).

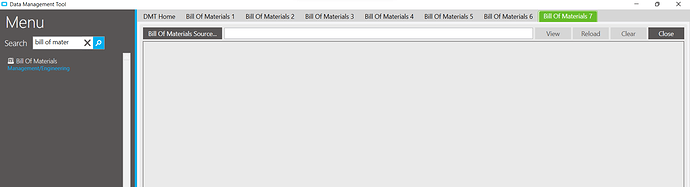

Good idea! I knew we could run sequential processes in playlists, but I didn’t know I could process them simultaneously. How do you tell DMT to process more than one file at a time? Just open more tabs, one for each process? I am considering cancelling my 18-hour process and seeing if splitting it up will save time.

Yep. You can just select the option from the tree multiple times and it will open a new, independent tab for each.

This seems great so far! I split up my 98k records into 10k record chunks and it is processing all of them at about 30-50 RPM.

Thank you!!

Nate

This can make a HUGE difference if you’ve got some unsavory BPMs in place. I will often put a condition at the start of a BPM so it only executes when `callContextClient.ClientType == "WinClient"`; that way it doesn’t fire for DMT, Service Connect, etc. That said, I imagine the new Kinetic UI will use something other than “WinClient”…

That is also a good idea. I don’t have any BPMs getting in the way (that I know of). But I like your sidestepping around DMT by checking the client type.

You can also use a condition for the User ID and then just make sure you use a standard DMT user account. We have a separate ECO group we use when doing DMT uploads and there are a few emails that get skipped on check-in when we use that DMT ECO group.

I tried to use the split import file feature in DMT, but it corrupted the output. “Corrupt” might be a bit harsh, but it did not play well with the comment fields I had in my file. The DMT split caused the line breaks in the comments to translate into new cells in the Excel file. I ended up splitting the file manually in Excel and it works great.
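For anyone who would rather script the split than do it by hand, here is a minimal Python sketch. It assumes the source has been saved as a CSV with a single header row and that comment fields containing line breaks are quoted; the function name `split_csv`, the `_partNN` file naming, and the UTF-8 encoding are my own choices, not anything DMT requires. The standard `csv` module treats a quoted multi-line comment as one field, so a row never gets cut in half at a line break.

```python
import csv
from pathlib import Path


def split_csv(source, out_dir, rows_per_file=10_000, encoding="utf-8"):
    """Split a CSV into numbered chunks, repeating the header row in each chunk.

    Returns the list of chunk paths in the order they were written.
    """
    source = Path(source)
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    written = []

    # newline="" leaves line-break handling to the csv module, so line breaks
    # inside quoted fields stay inside their field.
    with source.open(newline="", encoding=encoding) as src:
        reader = csv.reader(src)
        header = next(reader, None)
        if header is None:  # empty file, nothing to split
            return written

        out = None
        writer = None
        for index, row in enumerate(reader):
            if index % rows_per_file == 0:
                # Start a new chunk file every rows_per_file data rows.
                if out is not None:
                    out.close()
                path = out_dir / f"{source.stem}_part{len(written) + 1:02d}.csv"
                out = path.open("w", newline="", encoding=encoding)
                writer = csv.writer(out)
                writer.writerow(header)
                written.append(path)
            writer.writerow(row)
        if out is not None:
            out.close()
    return written
```

Calling `split_csv("operations.csv", "chunks", rows_per_file=10_000)` (a hypothetical file name) would write `chunks/operations_part01.csv`, `chunks/operations_part02.csv`, and so on. It is worth opening one chunk and checking a multi-line comment before loading anything.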

My delete task for 98k records was going to take 18+ hours. After I split it into 10 files, all were complete in less than 5 hours! That’s the kind of speed boost I was looking for!
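The numbers in this thread line up with some simple arithmetic. A rough sketch, assuming RPM means records per minute per DMT tab and that the tabs’ rates simply add together (in practice the rate drifts, so treat this as a ballpark only):

```python
def estimate_hours(records, rpm_per_session, sessions=1):
    """Hours to process `records` at a steady records-per-minute rate per session."""
    return records / (rpm_per_session * sessions) / 60


single = estimate_hours(98_000, 90)             # one tab at the best observed rate
parallel_slow = estimate_hours(98_000, 30, 10)  # ten tabs at 30 RPM each
parallel_fast = estimate_hours(98_000, 50, 10)  # ten tabs at 50 RPM each

print(f"{single:.1f} h single, {parallel_fast:.1f}-{parallel_slow:.1f} h across 10 tabs")
# prints "18.1 h single, 3.3-5.4 h across 10 tabs"
```

So even though each tab runs slower than a single load did (30-50 RPM versus 55-90), ten of them together move roughly 300-500 records per minute.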

Does anyone remember the old “turbo” button on the front of a PC that made your computer go SLOWER (yes it was backwards)?

Anyway… reading this question caused me to remember the old turbo button… But the real answer has already been provided. Split up your large files into multiple files, and run multiple imports simultaneously. This can all be done from the same client as well.

During our initial migration, this is what we did to get our import time down. I actually ended up writing a script that used DMT’s CLI to automate it, since I was doing so many test imports into Pilot. I had between 5 and 10 import processes running per table.
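This is not the script described above, just a minimal Python sketch of the general idea: launch one process per chunk file and cap how many run at once. The DMT command line itself is deliberately left out; `build_command` is a hypothetical placeholder that only runs a harmless Python one-liner, and you would replace it with your DMT executable and the arguments documented for your DMT version.

```python
import subprocess
import sys
from concurrent.futures import ThreadPoolExecutor


def run_all(commands, max_parallel=5):
    """Run each command (a list of arguments) concurrently.

    Returns the exit codes in the same order as `commands`.
    """
    def run(cmd):
        return subprocess.run(cmd, capture_output=True, text=True).returncode

    # Threads are enough here: each one just waits on an external process.
    with ThreadPoolExecutor(max_workers=max_parallel) as pool:
        return list(pool.map(run, commands))


def build_command(chunk_path):
    # PLACEHOLDER (assumption): stands in for the real DMT command line.
    # Swap in your DMT executable and its documented arguments here.
    return [sys.executable, "-c",
            "import sys; print('would import', sys.argv[1])",
            str(chunk_path)]


if __name__ == "__main__":
    chunks = ["operations_part01.csv", "operations_part02.csv"]  # hypothetical names
    codes = run_all([build_command(c) for c in chunks], max_parallel=5)
    for chunk, code in zip(chunks, codes):
        print(chunk, "ok" if code == 0 else f"failed with exit code {code}")
```

Capping `max_parallel` keeps you in the 5-10 range mentioned above rather than starting every chunk at once.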

I split it as well. I notice the longer the upload runs, the slower it gets, so I do them in smaller files.